Biological Embodiment and Artificial Simulation

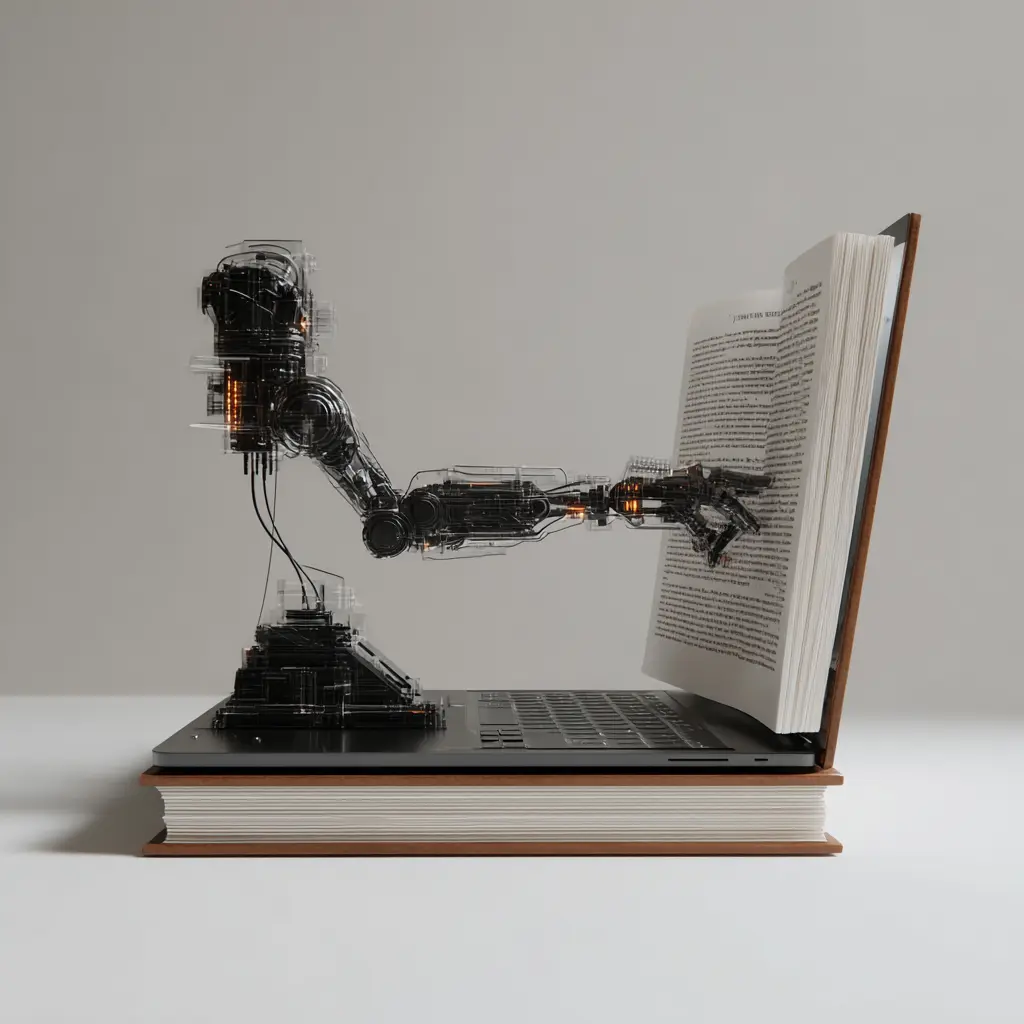

As we move toward the final years of this decade, the integration of agentic AI into global commerce has triggered a profound psychological shift. We are no longer simply using tools; we are interacting with "synthetic entities" that mimic human reasoning, speech, and even emotion. This phenomenon, the simulation of life, is not a recent invention of the silicon era. It continues a human drive that dates back to the clockwork marvels of the 18th century, such as the Bowes Museum Swan Automaton. The Swan was a philosophical statement: it used a hidden symphony of internal gears and levers to create a fluid, lifelike zoomorphic illusion that suggested preening, searching for food, and biological intent.

However, for the modern enterprise, the danger lies in mistaking this convincing simulation for actual agency. At Archificials, we believe that understanding the "Reality Gap" (the chasm between performance in simulation and performance in the real world) is the first step toward reclaiming human intent in an automated society.

The Phenomenological Chasm: Biological vs. Artificial Embodiment

There is a vast, arguably unbridgeable, difference between biological embodiment and artificial embodiment. For a human, the body is the seat of phenomenological experience, the qualitative "feel" of light, fatigue, or urgency. In contrast, embodied AI processes the world as a series of quantitative data streams. Where a human "feels" the weight of a material, an AI processes a numerical reading from a strain gauge.

Half a century ago, the philosopher Thomas Nagel famously argued in his essay "What Is It Like to Be a Bat?" that an organism has conscious mental states if and only if there is something it is "like to be" that organism. While modern AI systems can reasonably lay claim to intelligence, in that they can solve complex problems, they emphatically lack consciousness. They do not possess a "point of view" from which they apprehend their own actions.

The Anthropomorphic Trap: Comfort vs. Control

We are hardwired to see intent in moving shapes and wisdom in polite language. This tendency is being exploited in the 2026 marketplace through "Voice AI" and "Digital Assistants" that utilize human-like disfluencies (such as "um" or "uh") to trigger our social brains.

Research from the University of Cambridge highlights a critical trade-off for business leaders. While there is comfort in dealing with a human-like AI agent, the resulting "lost autonomy" can lead to a sense of worthlessness among employees. When we anthropomorphize a system, we risk:

- Overtrust: Assigning moral responsibility to a statistical model that remains indifferent to outcomes.

- Cognitive Offloading: Surrendering our strategic judgment to a machine that optimizes for patterns rather than meaning.

- The Identity Crisis: Blurring the line between tool and companion, which can entrench psychological delusions or "AI psychosis."

Strategic Intent: From Simulation to Agency

At Archificials, we approach AI design with Interdisciplinary Humility. Our systems, such as Mentor, are built as Socratic partners rather than magicians. Mentor does not pretend to be "human"; it uses guided dialogue to force users to articulate their own reasoning. This preserves the cognitive sovereignty of the user, ensuring that the machine supports the growth of human intelligence rather than simulating its replacement.

In a world saturated with synthetic media and deepfakes, the winning brands of 2026 will be those that reject the anthropomorphic "sleight of hand" and instead design for interpretability, accountability, and the preservation of human craftsmanship.

FAQ

What is the primary difference between biological and artificial embodiment?

Biological embodiment is a subjective, phenomenological experience where sensations like thirst or warmth carry intrinsic meaning. Artificial embodiment is functionalist, relying on quantitative data streams (such as LiDAR or RGB arrays) where the AI processes numerical patterns to maximize a reward signal without any internal "feeling" or understanding of the physical state.

Why do humans instinctively attribute human traits to AI systems?

Humans are hardwired for social connection and draw on "elicited agent knowledge" (what we already know about humans and other agents) to interpret unknown objects. Because AI systems use natural language and provide helpful responses, we subconsciously imbue them with intent and emotions. This tendency dates back to ancient anthropomorphism, where spiritual qualities were attributed to natural forces.

What are the risks of using human-like AI interfaces in a business context?

The major risks include overtrust, where users rely blindly on indifferent systems, and the erosion of employee autonomy. If an interface feels too human, workers may experience a sense of worthlessness or slip into "cognitive offloading," where they stop exercising the critical thinking necessary to validate the machine's outputs.

How does Thomas Nagel's "bat" argument apply to artificial intelligence?

Nagel’s argument states that an organism is conscious only if there is "something it is like to be" that organism. While AI demonstrates high-level intelligence (doing things), it lacks phenomenal consciousness (being something). We can study an AI's code and behavior, but there is no inner subjective life or "point of view" for the machine to experience.

Can an autonomous AI agent ever be held morally responsible for its actions?

No. Moral agency requires self-reflection, empathy, and the capacity for suffering, none of which exist in algorithms. An AI that performs incorrectly has not made an ethical "mistake"; it has failed to meet a parameter. Responsibility resides solely with the designers, users, and the institutional frameworks that deployed the system.